Anthropic is moving to block third-party harnesses from using Claude Code's architecture with non-Anthropic models. If you've been following the OpenClaw project - the open-source fork that let you plug any model into Claude Code's tool-use loop - this is the move that kills it. Third-party model routing through Claude Code's orchestration layer is being shut down.

This shouldn't surprise anyone. But it should alarm you if your AI coding workflow depends entirely on their harness.

I've been saying this for months: the harness is more valuable than the model. Claude Code isn't magic because of Opus. It's magic because of the tool-use loop, the file management, the error recovery, the way it reads a failing test and retries with a different approach. That's orchestration. And Anthropic just made it clear they consider that orchestration their competitive moat - not something they want you running with cheaper models.

Which means if you want model flexibility, cost control, or any independence at all, you need to build your own.

Why I'm Writing This

I run a production orchestration system that has processed over 5,000 AI agent jobs across 6 model families. Blog posts, TikTok video production, code generation, research synthesis, data analysis - all through the same harness layer.

Two different projects tell this story. OpenClaw is the third-party fork of Claude Code that let developers plug any model into its tool-use loop. That's what Anthropic is killing. OpenClaude is something completely different - it's my independent benchmark that tested whether cheap and free models could handle real production tasks when given proper orchestration. OpenClaude doesn't depend on Claude Code's internals at all. It runs on its own harness.

The results were clear. I ran 8 free models from OpenRouter through actual production pipelines - the same ones Opus normally handles. All 8 produced valid TikTok videos end to end. A two-cent model nailed a rate limiter implementation in 13 seconds that Opus took 63 seconds to complete. Four models scored 10/10 on the same test suite.

The harness carried them. Not the model.

OpenClaw's death proves the risk: if your workflow depends on a vendor's harness, they can pull the rug whenever they want. OpenClaude's results prove the escape route works: build your own harness, and the model becomes a plug-in you swap based on cost and task complexity. People who depended on OpenClaw are stuck. My system didn't skip a beat, because it was never built on someone else's foundation.

This is Part 1 of a series on building that foundation yourself.

What a Harness Actually Does

When people say "AI coding tool," they usually mean the model. The chatbot that writes code. That's like saying a car is its engine. The engine matters. But what makes a car useful is everything around it - the transmission, the steering, the brakes, the chassis.

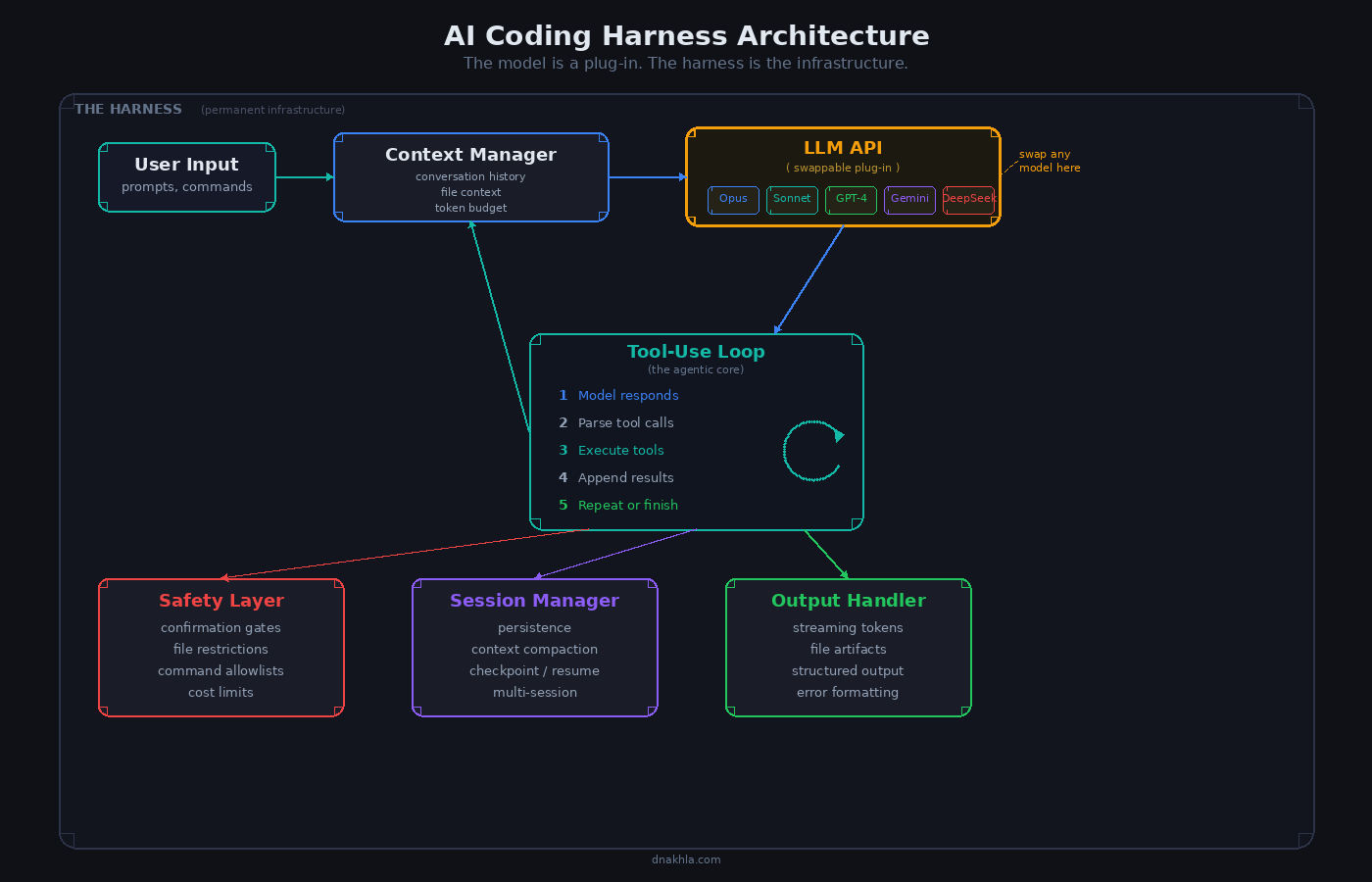

A coding harness is the chassis. Here's what it contains:

Fig. 1 - Full harness architecture. The model is a plug-in; everything else is scaffolding you control.

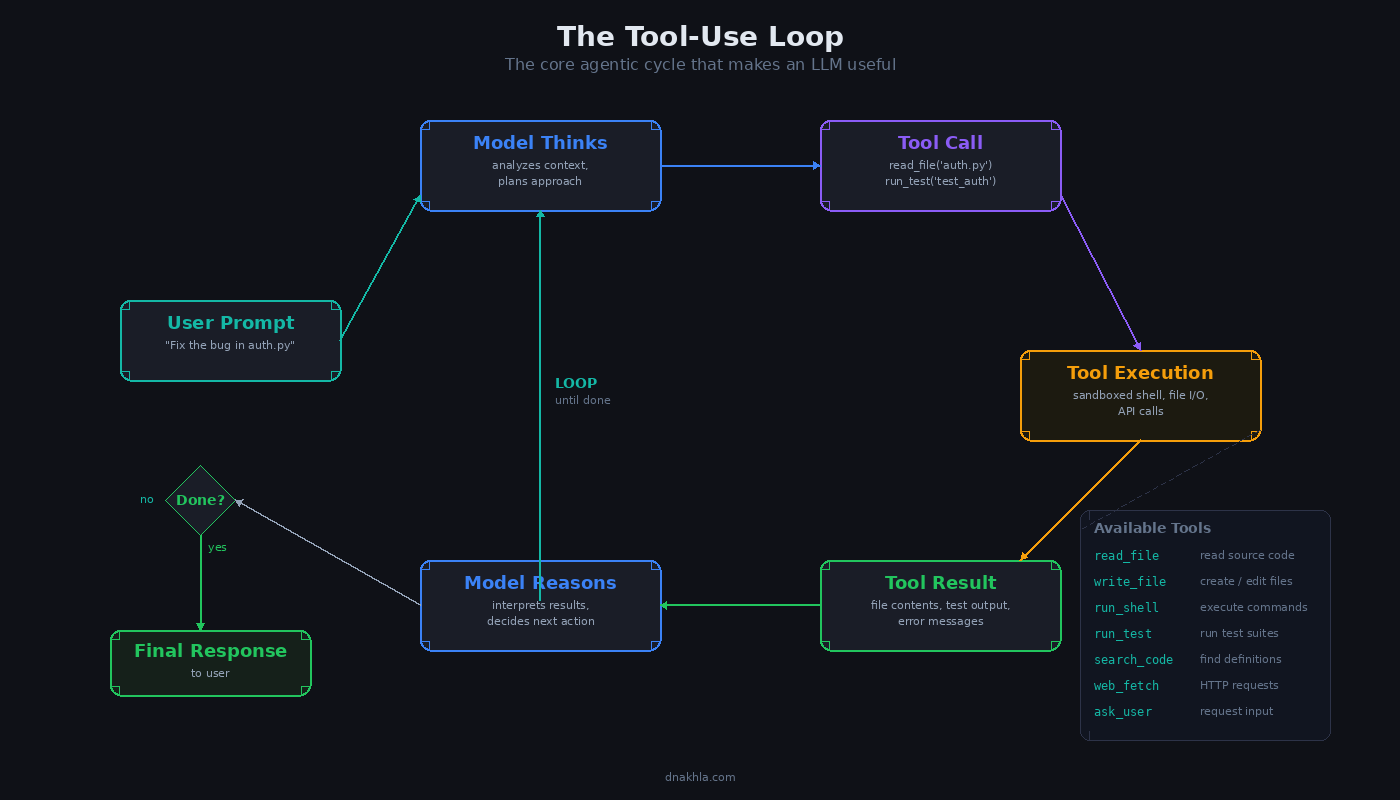

1. The Tool-Use Loop

This is the core. Everything else is infrastructure around this one idea: the model proposes an action, the harness executes it, the result goes back to the model, and it decides what to do next.

User prompt

-> Model thinks, proposes tool call (read file, write file, run command)

-> Harness executes tool call in a sandbox

-> Result returns to model

-> Model proposes next action (or declares done)

-> Loop until task complete or budget exhausted

Claude Code's tool-use loop handles file reads, file writes, shell execution, test running, git operations, and web search. The model never touches the filesystem directly. The harness mediates every interaction, which means the harness controls what the model can and cannot do.

This is where most people stop. They see the loop and think that's the whole product. It's maybe 20% of the work.

Fig. 2 - The tool-use loop. Think, propose, execute, observe, repeat.
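A minimal Python sketch of that loop, assuming a "model" is any function that reads the transcript so far and returns either a tool call or a final answer. `ToolCall`, `Step`, `run_agent`, and the scripted stand-in model are hypothetical names for illustration, not Claude Code's internals. Real sandboxing is out of scope here; in this sketch the `tools` dictionary is simply the only way the model can act on anything.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ToolCall:
    name: str   # which tool the model wants, e.g. "read_file"
    args: dict  # keyword arguments for that tool


@dataclass
class Step:
    call: Optional[ToolCall]  # None means the model declares the task done
    message: str = ""         # final answer when call is None


def run_agent(model: Callable[[list], Step], tools: dict, prompt: str, max_steps: int = 20):
    """Propose -> execute -> observe, until the model is done or the step budget runs out."""
    transcript = [{"role": "user", "content": prompt}]
    for _ in range(max_steps):
        step = model(transcript)                 # model proposes the next action
        if step.call is None:
            return step.message, transcript      # model declared done
        tool = tools.get(step.call.name)
        if tool is None:
            result = f"error: unknown tool {step.call.name!r}"
        else:
            try:
                result = tool(**step.call.args)  # the harness executes, not the model
            except Exception as exc:             # failures go back to the model as observations
                result = f"error: {exc}"
        transcript.append({"role": "tool", "name": step.call.name, "content": str(result)})
    return "budget exhausted", transcript


# Usage with a scripted stand-in for a real model API call.
files = {"notes.txt": "hello"}
tools = {"read_file": lambda path: files[path]}


def scripted_model(transcript):
    observations = [m for m in transcript if m["role"] == "tool"]
    if not observations:
        return Step(ToolCall("read_file", {"path": "notes.txt"}))
    return Step(None, "done after reading: " + observations[-1]["content"])


answer, log = run_agent(scripted_model, tools, "Summarize notes.txt")
print(answer)  # done after reading: hello
```

Swapping providers means swapping the `model` function; the loop, the tools, and the budget stay the same.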

2. Context Management

The model has a context window. Every file it reads, every test output it sees, every error message it processes - all of that consumes tokens. A real harness manages this actively:

- File context injection. When the model needs to understand a codebase, you don't dump every file into the prompt. You inject relevant files based on the task, the directory structure, and what the model has already seen.

- Token budgeting. You track how much context you've consumed and make decisions about what to keep and what to summarize. A 200k context window sounds huge until you're debugging across 15 files.

- Conversation compaction. Long sessions accumulate context that becomes stale. The harness periodically summarizes older turns and replaces them with compressed versions, freeing tokens for fresh information.

This is unglamorous work. It's also the difference between a coding agent that works on toy problems and one that works on real codebases. My production system runs a compaction pass when sessions approach 60% of the context window. Not perfect. Token estimation is inherently fuzzy. But without it, sessions die mid-task when the context fills up.
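A rough sketch of the 60% compaction trigger, assuming about four characters per token (a common rule of thumb for English text; real tokenizers vary by model, which is exactly why the estimate is fuzzy). `estimate_tokens`, `maybe_compact`, and `keep_recent` are hypothetical names, and the `summarize` lambda stands in for what would normally be a model call.

```python
from typing import Callable

CHARS_PER_TOKEN = 4   # assumption: rough heuristic; real tokenizers differ by model
COMPACT_AT = 0.60     # compact once estimated usage crosses 60% of the window


def estimate_tokens(messages: list) -> int:
    """Cheap, deliberately fuzzy token estimate from character counts."""
    return sum(len(m["content"]) for m in messages) // CHARS_PER_TOKEN


def maybe_compact(messages: list, context_window: int,
                  summarize: Callable[[list], str], keep_recent: int = 4) -> list:
    """Replace older turns with one summary message when the session nears its budget."""
    if estimate_tokens(messages) < COMPACT_AT * context_window or len(messages) <= keep_recent:
        return messages
    older, recent = messages[:-keep_recent], messages[-keep_recent:]
    summary = {"role": "system", "content": "Summary of earlier work: " + summarize(older)}
    return [summary] + recent


# Usage: ten 400-character turns is ~1,000 estimated tokens, past 60% of a 1,500-token window.
history = [{"role": "user", "content": "x" * 400} for _ in range(10)]
compacted = maybe_compact(history, 1500, summarize=lambda old: f"{len(old)} earlier turns")
print(len(compacted), compacted[0]["content"])  # 5 Summary of earlier work: 6 earlier turns
```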

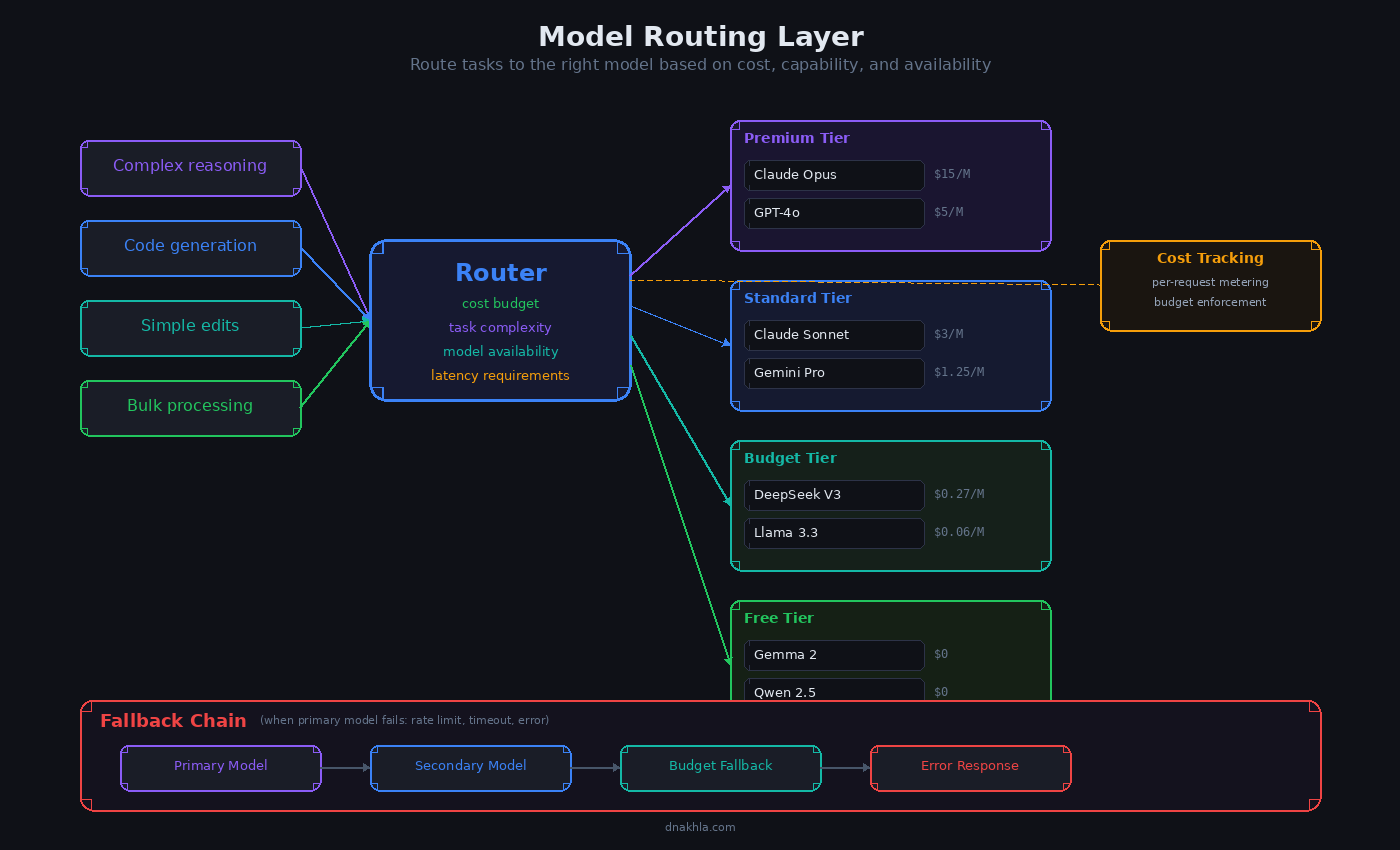

3. Model Routing

Here's where building your own harness pays off immediately. A locked-down harness gives you one model. Your harness gives you all of them.

My production system routes tasks based on what actually needs the expensive option:

| Task Type | Model Tier | Why |
|---|---|---|
| Greenfield implementation | Budget ($0.01-0.02/task) | Well-specified tasks are commodity work |
| Content generation | Mid ($0.05-0.10/task) | Needs voice and judgment, not deep reasoning |
| Debugging, architecture | Premium ($200/mo flat) | Requires multi-step reasoning across code |
| Experimentation | Free | Non-critical, acceptable failure rate |

This isn't theory. I watched four models score 10/10 on the same rate limiter test suite. Costs ranged from $0 to $200/month. For well-defined tasks, the model is interchangeable. For debugging, Opus went 8/8 where the best cheap model got 5/8.

The routing logic doesn't need to be complicated. Task type plus a cost threshold gets you 80% of the value. But you can't do it at all if your harness only speaks to one provider.

Fig. 3 - Model routing by task type. Most teams overpay for premium on tasks that don't need it.
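A sketch of "task type plus a cost threshold" routing. The tier names follow the table above, the per-task costs use the upper end of each range, and the model IDs are placeholders. Treating the flat-rate premium plan as zero marginal cost per task, and defaulting unknown task types to the mid tier, are assumptions of this sketch rather than rules from the production system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    name: str
    model: str            # hypothetical placeholder IDs; substitute real provider/model names
    cost_per_task: float  # rough USD per task


FREE = Tier("free", "free-model", 0.0)
BUDGET = Tier("budget", "budget-model", 0.02)
MID = Tier("mid", "mid-model", 0.10)
PREMIUM = Tier("premium", "premium-model", 0.0)  # flat monthly plan: ~0 marginal cost per task

# Task type -> tier, mirroring the routing table.
ROUTES = {
    "greenfield": BUDGET,
    "content": MID,
    "debugging": PREMIUM,
    "architecture": PREMIUM,
    "experiment": FREE,
}
DOWNGRADES = [MID, BUDGET, FREE]  # tried in order: most capable tier that fits the cap wins


def route(task_type: str, max_cost: Optional[float] = None) -> Tier:
    tier = ROUTES.get(task_type, MID)  # assumption: unknown task types default to mid
    if max_cost is not None and tier.cost_per_task > max_cost:
        for cheaper in DOWNGRADES:
            if cheaper.cost_per_task <= max_cost:
                return cheaper
    return tier


print(route("greenfield").model)             # budget-model
print(route("content", max_cost=0.05).name)  # budget
```

The whole policy lives in one dictionary, so adding a provider or re-tiering a task type is a one-line change rather than a code path.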

4. Safety Layer

An unsupervised coding agent with shell access is a loaded weapon. The safety layer is the trigger guard.

- Confirmation gates. Destructive operations - `rm -rf`, `git push --force`, dropping database tables - require explicit approval before execution.

- File restrictions. The agent can read anything but can only write to designated directories. No modifying system files, no touching other projects.

- Command allowlists. Shell execution is filtered. The model can run tests and build commands. It cannot `curl` arbitrary URLs or install packages without approval.

- Rollback capability. Every file write is tracked. If the agent goes sideways, you can revert to the pre-task state in one command.
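A sketch of those four rules with hypothetical policy values: an allowlist plus confirmation gate for commands, a write check that resolves `..` before comparing paths, and a journal that snapshots each file before the agent's first write so a task can be reverted in one call. It assumes commands are executed as argument lists without a shell (so `;` or `&&` tricks don't apply), and it leaves out the approval UI and OS-level sandboxing a real harness would need.

```python
import shlex
import tempfile
from pathlib import Path

# Hypothetical policy values; in practice each rule would trace back to a real incident.
ALLOWED = {"pytest", "make", "npm", "git", "ls"}   # tests, builds, read-only inspection
DESTRUCTIVE = [
    ("rm", {"-rf"}), ("rm", {"-fr"}),
    ("git", {"push", "--force"}), ("git", {"push", "-f"}),
]
WRITABLE_ROOTS = [Path("/work/project").resolve()]  # hypothetical project directory


def check_command(command: str) -> str:
    """Return "allow" or "ask" (needs explicit human approval)."""
    try:
        argv = shlex.split(command)
    except ValueError:                  # unbalanced quotes and similar
        return "ask"
    if not argv:
        return "ask"
    if any(argv[0] == prog and flags <= set(argv[1:]) for prog, flags in DESTRUCTIVE):
        return "ask"                    # confirmation gate
    if "drop table" in " ".join(argv).lower():
        return "ask"
    return "allow" if argv[0] in ALLOWED else "ask"  # curl, pip install, ... need approval


def can_write(path: str) -> bool:
    """Reads are unrestricted; writes must land under a designated root after resolving '..'."""
    target = Path(path).resolve()
    return any(target == root or root in target.parents for root in WRITABLE_ROOTS)


class WriteJournal:
    """Snapshot each file before its first write so a whole task can be reverted at once."""

    def __init__(self) -> None:
        self.originals = {}             # Path -> original bytes, or None if it did not exist

    def write(self, path: str, text: str) -> None:
        p = Path(path)
        if p not in self.originals:
            self.originals[p] = p.read_bytes() if p.exists() else None
        p.write_text(text)

    def rollback(self) -> None:
        for p, original in self.originals.items():
            if original is None:
                p.unlink(missing_ok=True)   # file was created during the task
            else:
                p.write_bytes(original)
        self.originals.clear()


print(check_command("pytest -q"))                   # allow
print(check_command("rm -rf build/"))               # ask
print(check_command("curl https://example.com"))    # ask
print(can_write("/work/project/../../etc/passwd"))  # False

with tempfile.TemporaryDirectory() as tmp:
    app = Path(tmp) / "app.py"
    app.write_text("original")
    journal = WriteJournal()
    journal.write(str(app), "agent edit")
    journal.write(str(Path(tmp) / "new.py"), "scratch")
    journal.rollback()
    restored = app.read_text()
    new_file_gone = not (Path(tmp) / "new.py").exists()
```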

Every critical safety rule in my system traces back to a real incident. Not hypothetical best practices - actual things that went wrong. Something breaks, you write a one-line rule, and it becomes permanent policy. The system learns from its mistakes because you encode them where they cannot be forgotten.

5. Session Management

A single coding task might take 30 seconds. A complex debugging session might take 20 minutes across multiple model calls. Session management handles the lifecycle:

- Persistent sessions. A worker picks up a task and maintains its session across multiple interactions. Context accumulates. The model builds understanding of the codebase over time rather than starting fresh every call.

- Session reuse. When a new task arrives for the same project, the harness routes it to an existing session that already has the relevant context loaded. This saves tokens and time.

- Compaction checkpoints. Before a session gets too long, the harness writes a checkpoint summarizing current state, key decisions, and pending work. If the session needs to restart, it reads the checkpoint instead of replaying the full history.

- Graceful timeout. If a task runs too long or the model gets stuck in a loop, the harness kills the session cleanly and reports what happened.
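A sketch of a per-project session pool covering reuse, checkpoints, and timeouts. `SessionPool` and its methods are hypothetical; the checkpoint string is a placeholder for a model-written summary of current state, key decisions, and pending work, and the explicit `now` arguments exist only to make the timeout easy to demonstrate.

```python
import time
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Session:
    project: str
    history: List[str] = field(default_factory=list)
    task_started: Optional[float] = None   # set while a task is running


class SessionPool:
    def __init__(self, max_turns: int = 50, task_timeout_s: float = 20 * 60):
        self.max_turns = max_turns
        self.task_timeout_s = task_timeout_s
        self.sessions: Dict[str, Session] = {}
        self.checkpoints: Dict[str, str] = {}   # survives session restarts

    def get(self, project: str) -> Session:
        """Reuse the live session for a project, or start one seeded from its last checkpoint."""
        if project not in self.sessions:
            session = Session(project)
            if project in self.checkpoints:
                session.history.append("[checkpoint] " + self.checkpoints[project])
            self.sessions[project] = session
        return self.sessions[project]

    def record(self, project: str, turn: str) -> None:
        session = self.get(project)
        session.history.append(turn)
        if len(session.history) >= self.max_turns:
            # Placeholder summary; a real harness would ask a model to write it.
            checkpoint = f"{len(session.history)} turns; last: {session.history[-1]}"
            self.checkpoints[project] = checkpoint
            session.history = ["[checkpoint] " + checkpoint]

    def start_task(self, project: str, now: Optional[float] = None) -> Session:
        session = self.get(project)
        session.task_started = time.monotonic() if now is None else now
        return session

    def finish_task(self, project: str) -> None:
        self.get(project).task_started = None

    def reap(self, now: Optional[float] = None) -> List[str]:
        """Drop sessions whose current task overran the timeout and report what happened."""
        now = time.monotonic() if now is None else now
        reports = []
        for project, session in list(self.sessions.items()):
            if session.task_started is not None and now - session.task_started > self.task_timeout_s:
                del self.sessions[project]
                reports.append(f"{project}: timed out; restart from checkpoint "
                               f"{self.checkpoints.get(project)!r}")
        return reports


pool = SessionPool(max_turns=3, task_timeout_s=60)
reused = pool.get("blog") is pool.get("blog")
for turn in ["read style guide", "draft intro", "revise intro"]:
    pool.record("blog", turn)
pool.start_task("blog", now=100.0)
reports = pool.reap(now=200.0)
restarted = pool.get("blog")
print(restarted.history)  # ['[checkpoint] 3 turns; last: revise intro']
```

The restarted session begins from one checkpoint line instead of replaying every earlier turn.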

My production engine ticks every 10 seconds, managing a pool of workers across multiple projects. Workers persist between jobs. A worker that just finished a blog post already has the writing style context loaded - it doesn't rebuild that understanding from scratch on the next task.

6. Output Handling

The model produces text. The harness turns that into useful artifacts: code written to files (not stdout), automatic test execution with results fed back for iteration, structured output validation for specific formats, and streaming for interactive use. The harness decides what goes where based on project structure and task type.
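A sketch of two of those steps, assuming replies arrive as plain strings: writing the first fenced code block to a file instead of stdout, and validating structured JSON while producing a feedback message the harness can send back for another attempt. `write_code`, `validate_json`, and the required keys are hypothetical.

```python
import json
import re
import tempfile
from pathlib import Path
from typing import Optional, Sequence, Tuple

# Matches a fenced block (three backticks plus an optional language tag) and captures its body.
FENCED = re.compile(r"`{3}[\w+-]*\n(.*?)`{3}", re.S)


def write_code(reply: str, path: str) -> bool:
    """Write the first fenced code block in a model reply to a file."""
    match = FENCED.search(reply)
    if match is None:
        return False
    target = Path(path)
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(match.group(1))
    return True


def validate_json(reply: str, required: Sequence[str]) -> Tuple[Optional[dict], Optional[str]]:
    """Return (data, None) if valid, else (None, feedback) to send back to the model."""
    try:
        data = json.loads(reply)
    except json.JSONDecodeError as exc:
        return None, f"Output was not valid JSON ({exc.msg} at line {exc.lineno}). Reply with JSON only."
    if not isinstance(data, dict):
        return None, "Expected a JSON object."
    missing = [key for key in required if key not in data]
    if missing:
        return None, "Missing required keys: " + ", ".join(missing) + "."
    return data, None


data, feedback = validate_json('{"title": "Part 1"}', ["title", "body"])
print(feedback)  # Missing required keys: body.

with tempfile.TemporaryDirectory() as tmp:
    fence = "`" * 3
    reply = f"Here is the fix:\n{fence}python\nprint('hi')\n{fence}\n"
    wrote = write_code(reply, str(Path(tmp) / "src" / "fix.py"))
    written = (Path(tmp) / "src" / "fix.py").read_text()
```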

What This Looks Like in Production

When I say "production," I mean this:

- 5,000+ autonomous agent jobs completed

- 6 model families (Claude, Gemini, Kimi, DeepSeek, Qwen, Meta Llama)

- 20 concurrent worker slots

- Cron-scheduled jobs firing on daily cadence

- Quality gates catching bad output before it ships

- Automatic retry and re-routing on model failures
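The retry and re-routing item can be sketched as a fallback chain. This assumes each model is wrapped in a plain `call(prompt) -> str` function and that a quality gate is a predicate over the output; `call_with_fallback`, two attempts per model, and the doubling backoff are illustrative choices, not the production engine's settings.

```python
import time
from typing import Callable, Sequence, Tuple


class AllModelsFailed(RuntimeError):
    pass


def call_with_fallback(
    prompt: str,
    chain: Sequence[Tuple[str, Callable[[str], str]]],
    attempts_per_model: int = 2,
    base_delay_s: float = 1.0,
    accept: Callable[[str], bool] = lambda out: bool(out.strip()),
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[str, str]:
    """Try each (name, call) in order; retry with exponential backoff, then re-route."""
    errors = []
    for name, call in chain:
        for attempt in range(1, attempts_per_model + 1):
            try:
                output = call(prompt)
            except Exception as exc:          # timeouts, rate limits, provider outages
                errors.append(f"{name} attempt {attempt}: {exc}")
            else:
                if accept(output):            # quality gate before anything ships
                    return name, output
                errors.append(f"{name} attempt {attempt}: rejected by quality gate")
            if attempt < attempts_per_model:
                sleep(base_delay_s * 2 ** (attempt - 1))
    raise AllModelsFailed("; ".join(errors))


def flaky(prompt: str) -> str:
    raise TimeoutError("upstream timeout")


def empty(prompt: str) -> str:
    return "   "


def solid(prompt: str) -> str:
    return "draft for: " + prompt


used, text = call_with_fallback(
    "rate limiter", [("free", flaky), ("budget", empty), ("mid", solid)], sleep=lambda s: None
)
print(used, text)  # mid draft for: rate limiter
```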

The engine dispatches 150+ jobs per day. Each job runs in a forked child process so the main loop never blocks. If a job hangs, the supervisor keeps ticking. Jobs enter from three paths: messages that get triaged and decomposed, cron schedules that fire at configured times, and planning passes that break complex requests into dependency chains.
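A sketch of that dispatch pattern: a tick loop that polls child processes without blocking, kills any that overrun a time budget, and fills free slots from a queue. The engine described here forks child processes; this sketch instead launches each job as a separate command through `subprocess.Popen`, which keeps it portable. The 20 slots and 10-second tick mirror the numbers above, while the job timeout and the `Supervisor` API are assumptions.

```python
import subprocess
import sys
import time
from collections import deque


class Supervisor:
    """Tick-driven dispatcher: each job is a child process, so a hung job never blocks the loop."""

    def __init__(self, slots: int = 20, job_timeout_s: float = 600.0):
        self.slots = slots
        self.job_timeout_s = job_timeout_s
        self.queue = deque()   # pending (job_id, argv)
        self.running = {}      # job_id -> (Popen, start time)
        self.results = {}      # job_id -> "ok" | "failed (exit N)" | "timeout"

    def submit(self, job_id: str, argv: list) -> None:
        self.queue.append((job_id, argv))

    def tick(self) -> None:
        now = time.monotonic()
        # Reap finished or overdue children; poll() never blocks.
        for job_id, (proc, started) in list(self.running.items()):
            code = proc.poll()
            if code is None:
                if now - started <= self.job_timeout_s:
                    continue               # still running within its budget
                proc.kill()                # hung job: kill it and keep ticking
                proc.wait()
                self.results[job_id] = "timeout"
            else:
                self.results[job_id] = "ok" if code == 0 else f"failed (exit {code})"
            del self.running[job_id]
        # Fill free worker slots from the queue.
        while self.queue and len(self.running) < self.slots:
            job_id, argv = self.queue.popleft()
            self.running[job_id] = (subprocess.Popen(argv), time.monotonic())

    def run_until_idle(self, tick_s: float = 10.0) -> None:
        while self.queue or self.running:
            self.tick()
            time.sleep(tick_s)


# Demo: two slots, a 2-second job budget, and a short tick so it finishes quickly.
sup = Supervisor(slots=2, job_timeout_s=2.0)
sup.submit("ok", [sys.executable, "-c", "pass"])
sup.submit("fail", [sys.executable, "-c", "raise SystemExit(3)"])
sup.submit("hang", [sys.executable, "-c", "import time; time.sleep(30)"])
sup.run_until_idle(tick_s=0.2)
print(sup.results)
```

Nothing in the supervisor knows which model a job uses; the job's own command decides that.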

None of this is model-dependent. The same engine runs Claude, Gemini, Kimi, and free OpenRouter models. The model is a parameter, not an assumption.

That's the whole point.

What Comes Next

This is Part 1 - the architecture overview. You now know what the six components are and why each one matters. Future posts in this series will go deep on implementation:

- Part 2: The Tool-Use Loop - Building the core action-execution-feedback cycle. How to implement tool calls, sandbox execution, and the retry logic that turns a single model call into an effective agent.

- Part 3: Context Management - Token budgeting, file injection strategies, and conversation compaction. The boring work that makes everything else possible.

- Part 4: Model Routing - Multi-provider integration, fallback chains, cost optimization, and the routing rules that emerged from 5,000+ production jobs.

- Part 5: Safety and Sessions - Confirmation gates, file restrictions, persistent sessions, and the incident-driven approach to building safety rules.

Anthropic built the best harness in the industry and attached it to their best model. Everyone assumed the model was the magic. Then the harness leaked, and it turned out the orchestration was doing most of the heavy lifting. Now they're locking it down.

The lesson isn't "Claude Code is bad." It's good. It's really good. The lesson is that depending on a single vendor for your entire AI coding workflow is a strategic mistake. The model will change. The pricing will change. The terms will change. The one thing you can control is the harness.

Danny Nakhla runs production AI orchestration systems processing thousands of autonomous agent jobs across multiple model families. He writes about what actually works at dnakhla.com.